Synthetic Identities

How AI Accelerates Fraud Against Financial Institutions

📅 January 8, 2026

This article was written by IFI for the January/February 2026 issue of the ABA Risk and Compliance Magazine. Reproduced with permission.

Fraud is as old as commerce itself. In 300 BC, two Greek merchants conspired to sink their ship and collect the insurance money, perhaps history’s first recorded financial fraud. The plot failed when they were caught in the act: Hegestratos drowned while Zenothemis was sentenced to prison by a court in Athens.[1] Since then, the methods used by fraudsters, and the countermeasures required by institutions, have significantly increased in sophistication and scale.

Fraud does not require artificial intelligence (AI), but AI can increase the scale and success rate of fraud.[2]

AI-powered tools can enable automation and enhance efficiency within illicit operations and economies, just as they can do in legitimate enterprises. The use of AI “deepfakes” to perpetrate fraud is increasing, particularly their use to circumvent identification and verification controls.

“Beginning in 2023 and continuing in 2024, FinCEN has observed an increase in suspicious activity reporting by financial institutions describing the suspected use of deepfake media in fraud schemes targeting their institutions and customers. These schemes often involve criminals altering or creating fraudulent identity documents to circumvent identity verification and authentication methods.” – Source: FinCEN, Nov. 2024 [3]

To understand how AI is used in frauds targeting financial institutions and their customers, some key elements must be understood.

Synthetic identities

Synthetic identities are fictitious or partially fictitious personal identities. The identities can be fully fabricated using invented names, birthdates, and other identifiers, or they can be partially fabricated. Partially fabricated identities use combinations of authentic and falsified information, such as a real Social Security Number (SSN) compromised in a data breach, combined with a fake name. Synthetic identities can be used to open accounts which are subsequently used to perpetrate fraud, including credit or loan fraud, as well as to further other illicit activity, such as funneling funds as part of money laundering.[4]

Shell companies

Shell companies are businesses that have legal structures but no real operations or assets. They have legitimate uses and are not illegal by definition. However, they are extensively used in money laundering, proliferation financing, sanctions evasion, tax evasion and other types of financial crime, according to the Financial Action Task Force (FATF) and FinCEN.[5] Shell companies are often used in attempts to obscure the source, destination, purpose, and beneficiaries of illicit financial flows. They are appealing to criminal organizations because they involve minimal resources to set up and operate, and when authorities identify one company as being linked with illicit activity, a new one can be quickly opened, frequently with the same owner or at the same address.

AI is not required to set up shell companies: in 2017, Hasan Hakim Brown pleaded guilty to stealing $24 million in Covid-19 relief funds. He used data storage and virtualization machines to manufacture synthetic identities, automatically open bank accounts and shell companies, and monitor activity linked with them.[6]

The difference AI makes is that it enables synthetic identities or entities to be generated more rapidly. AI can also create identity and other information to make the synthetic identities and entities appear more credible, making detection more difficult.

Deepfakes

Deepfakes use AI to create highly realistic content that is difficult to distinguish from unmodified authentic information. In the financial services context, this can include generation of falsified documents, photographs, and videos to circumvent customer identification and verification controls. It can also include authentic-looking documents to accompany a credit application, including payslips, bank statements, utility bills, and phone bills.[7]

Deepfakes can also be used in evolutions of “CEO frauds,” in which an employee is induced to take actions by a person appearing to be a trusted or senior colleague — such as a C-suite or finance department executive — or to perpetrate frauds targeting customers, such as romance scams, family/emergency scams, and investment scams.

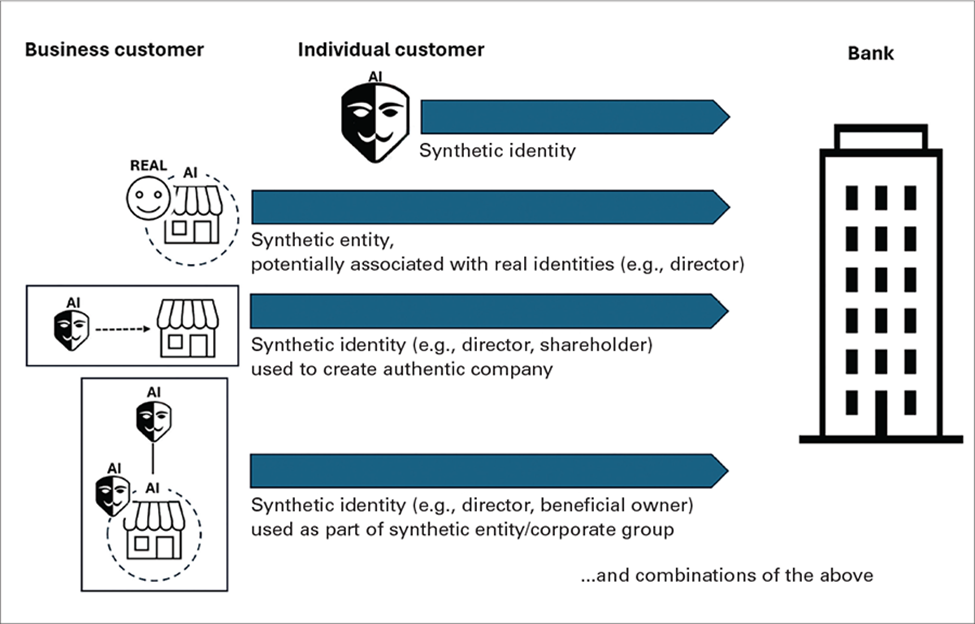

In addition to AI-generated or AI-enhanced identities and entities described above, the use of AI in fraud may be multi-layered. Figure 1 shows some of these possibilities: individuals associated with an entity customer may be synthetic, synthetic identities may be used to create a real company, or AI may be used to falsify multi-layered corporate groups. This presents new ways for illicit actors to avoid revealing their identities and to reduce the possibility of detection.

Figure 1. Examples of how AI-enabled fraud can be used to circumvent customer identification and verification

AI-enabled frauds are not theoretical: they are already occurring. FinCEN’s analysis of BSA data indicates that criminals have already used AI to generate falsified documents, photographs, and videos, including those used on driver’s licenses and passport cards. These fraudulent identities have successfully been used to open accounts and receive and launder the proceeds of fraud and other illicit activities.[8] Financial institutions should incorporate measures to identify AI-specific red flags throughout the client lifecycle.

Red flags during onboarding

Red flags that may indicate the use of AI-generated synthetic identities, entities, or documents include:[9]

Indicators that an image or video may be an AI-generated or modified fake include:[10]

Technology solutions, such as metadata examination tools, can also be used to identify indicators of potential deepfakes. For example, intact metadata is an indicator of authenticity, whereas stripped or missing metadata suggests that the media may have been manipulated and that further investigation is required.[11]
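The metadata check described above can be illustrated with a minimal Python sketch. It assumes the metadata has already been extracted from the image into a dictionary by a separate tool. The field list `EXPECTED_FIELDS` and the function names are hypothetical choices for illustration, not part of any cited guidance, and a missing field is treated only as a prompt for further investigation, not as proof of manipulation.

```python
# Hypothetical set of metadata fields that a camera-originated image
# would normally be expected to carry (illustrative assumption only).
EXPECTED_FIELDS = ("Make", "Model", "DateTimeOriginal")


def missing_metadata(metadata: dict) -> list:
    """Return the expected fields that are absent or empty."""
    return [
        field
        for field in EXPECTED_FIELDS
        if not str(metadata.get(field) or "").strip()
    ]


def needs_further_review(metadata: dict) -> bool:
    """Flag the media for investigation if any expected field is missing."""
    return bool(missing_metadata(metadata))
```

For instance, `needs_further_review({"Make": "ExampleCam"})` returns `True` because `Model` and `DateTimeOriginal` are absent. In practice such a check would be one input among several, since legitimate images can also lose metadata when they are resized or sent through messaging applications.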

Red flags during the client lifecycle

The objective of opening an account using a synthetic identity, whether an individual or an entity/business account, is to undertake illicit activity such as credit fraud or laundering criminal proceeds. During the client lifecycle, red flags are not necessarily specific to AI-enabled activity. For example, building trust and credit history over an extended period ahead of maxing out credit lines and defaulting, called “bust out fraud,” is not unique to AI-enabled or synthetic identities and accounts. Additional risk management measures that can enhance identification and action on AI-enabled frauds are described below in Action 3: Ongoing monitoring and assessment.

Table 1: Key Resources

Regulators and law enforcement agencies have published reports detailing methodologies and examples of AI-generated deepfakes, including red flags, and how to identify and mitigate these risks.

| Date | Agency | Comment/Link |
|---|---|---|
| Not specified | Federal Reserve | Detailed resources to identify and respond to synthetic identity fraud risks. Synthetic Identity Fraud Mitigation Toolkit: https://fedpaymentsimprovement.org/resources/synthetic-identity-fraud-mitigation-toolkit/ |
| January 2025 | U.S. Department of Homeland Security (DHS) | Includes sections on deepfake detection methods and heuristics for identifying deepfakes. Impacts of Adversarial Use of Generative AI on Homeland Security: https://www.dhs.gov/sites/default/files/2025-01/25_0110_st_impacts_of_adversarial_generative_aI_on_homeland_security_0.pdf |
| December 2024 | Commodity Futures Trading Commission (CFTC) | Sets out requirements under CFTC regulations that could be implicated by potential AI uses and risks. Use of AI in CFTC-Regulated Markets: https://www.cftc.gov/PressRoom/PressReleases/9013-24 |
| December 2024 | Federal Bureau of Investigation (FBI) | Provides examples of how criminals may use AI-generated text, images, audio, and videos. Criminals Use Generative AI to Facilitate Financial Fraud: https://www.ic3.gov/PSA/2024/PSA241203%23retfn3 |
| November 2024 | Financial Crimes Enforcement Network (FinCEN) | Includes sections on detecting and mitigating deepfake identity documents, and financial red flag indicators of deepfake media abuse. Alert on Fraud Schemes Involving Deepfake Media Targeting Financial Institutions: https://www.fincen.gov/system/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf |
| November 2024 | National Credit Union Administration (NCUA) | Contains a section on managing risks from the use of AI, including preventive controls, monitoring and auditing, and termination procedures. NCUA Artificial Intelligence Compliance Plan: https://ncua.gov/ai/ncua-artificial-intelligence-compliance-plan |
| September 2024 | Office of the Superintendent of Financial Institutions (OSFI) and the Financial Consumer Agency of Canada (FCAC) (Canada) | Outlines key risks that arise for financial institutions from AI, based in part on findings from a survey of federally regulated financial institutions. Risk Report: AI Uses and Risks at Federally Regulated Financial Institutions: https://www.osfi-bsif.gc.ca/en/about-osfi/reports-publications/osfi-fcac-risk-report-ai-uses-risks-federally-regulated-financial-institutions |
| April 2024 | FinCEN | Details emerging risks, typologies, and red flag indicators to identify theft and fraud schemes involving counterfeit U.S. passport cards. Notice on the Use of Counterfeit U.S. Passport Cards to Perpetrate ID Theft and Fraud Schemes at Financial Institutions: https://www.fincen.gov/sites/default/files/shared/FinCEN_Notice_Counterfeit_US_Passport_FINAL508.pdf |
| March 2024 | Competition Bureau Canada | News release with statistics on fraud cases, what fraudsters are using AI for, red flags to watch for, and how to protect yourself. The Rise of AI: Fraud in the Digital Age: https://www.canada.ca/en/competition-bureau/news/2024/03/the-rise-of-ai-fraud-in-the-digital-age.html |
| January 2024 | U.S. Securities and Exchange Commission (SEC) | Includes a section on AI-enabled technology used to scam investors, including deepfake video and audio. Artificial Intelligence (AI) and Investment Fraud: Investor Alert |
| January 2024 | FinCEN | Provides threat pattern and trend information on identity-related suspicious activity based on BSA data filed with FinCEN from January to December 2021. Financial Trend Analysis: Identity-Related Suspicious Activity: 2021 Threats and Trends: https://www.fincen.gov/sites/default/files/shared/FTA_Identity_Final508.pdf |
| September 2023 | National Security Agency (NSA), FBI, Cybersecurity and Infrastructure Security Agency (CISA) | Describes trends and case studies of GenAI, and recommendations for defending against deepfakes. Contextualizing Deepfake Threats to Organizations: https://media.defense.gov/2023/Sep/12/2003298925/-1/-1/0/CSI-DEEPFAKE-THREATS.PDF |
| 2021 | DHS | Developed by a Public-Private Analytic Exchange Program, includes case studies and scenarios of social engineering attacks. Increasing Threat of Deepfake Identities: https://www.dhs.gov/sites/default/files/publications/increasing_threats_of_deepfake_identities_0.pdf |

An effective response to fraud requires action throughout an institution. While the use of AI and deepfakes in the context of client due diligence has been described in detail above, staff throughout the institution may be targeted by other types of AI-enhanced attacks.

Deepfake-Enhanced Frauds: CEO Fraud Cases

In addition to the use of AI to circumvent client due diligence, AI enhances the scale and success of other frauds too. These frauds can target financial institution staff as well as customers. Significant losses have already occurred in deepfake-enhanced “CEO frauds,” such as the well-known fraud against Arup, discussed in the article Deepfake Deepdive.

In CEO frauds, AI deepfakes are used to target businesses, including companies, suppliers and business partners. The same methodologies also can be used to create more personalized and credible romance scams, family/medical emergency scams, and investment frauds. For example, in a family scam, a fraudster may use a deepfake voice or video to impersonate a target’s family member to gain trust and establish credibility.

According to the Department of Homeland Security, National Security Agency, Federal Bureau of Investigation, and Cybersecurity and Infrastructure Security Agency, deepfakes can also be used against institutions for objectives including:

Impersonating executives to damage brands.

AI-Enhanced National Security and Sanctions Risks to Banks: North Korean Remote IT Workers

Financial institutions may also face national security and sanctions risks in their hiring processes due to sophisticated use of AI. The FBI has identified that North Korean IT workers are gaining employment at U.S. companies as remote workers to generate illicit revenues for the regime. AI and face-swapping technology have been used in job interviews to hide their true identities.[12] In addition to generating revenues from their salaries, the North Korean workers can install unauthorized remote access software, compromise security, gain access to sensitive data, and steal proprietary code. Some companies have been extorted, with proprietary data and code held hostage until ransoms are paid.

In July 2025, Christina Marie Chapman, from Arizona, was sentenced for her role in assisting North Korean workers to get remote IT positions at 309 U.S. companies.[13] The fraud involved theft of the identities of 68 U.S. persons and generated at least $17.1 million in revenue for North Korea. In similar cases, defendants are alleged to have used shell companies with corresponding websites and accounts to make it appear as though the remote IT workers were affiliated with legitimate U.S. businesses.

Paying salaries to these workers is a contravention of North Korea sanctions by the institution involved. It also represents a potential compromise of critical private sector financial and commercial infrastructure, which adversely impacts economic security.

AI-enabled fraud, like many other types of fraud, intersects multiple teams within an organization including cyber, fraud, AML, and credit risk. To ensure a unified response, the organization should establish close cooperation, such as working groups with representatives from all relevant departments. This ensures knowledge is shared, while also minimizing duplication and gaps. Datasets should be integrated to provide a more complete view which better enables anomalies to be identified.

For example:

Financial institutions should be vigilant and continuously review and adapt their anti-fraud countermeasures as methodologies evolve. Sources of information include internal investigations, law enforcement and regulatory advisories, and sharing information within industry.

Policies, processes, systems, and controls should be reviewed and designed to manage AI-specific risks. For example:

Financial institutions can also consider the use of analytics and AI to enhance their anti-fraud measures, using AI as a “shield” against its use by fraudsters as a “sword.”

Ongoing monitoring and assessment are key to identifying fraud, as well as preventing further fraud. Some risk management measures that can enhance identification and action on AI-specific frauds throughout the client lifecycle include:[14]

If fraud losses occur (or there is a “near miss”) or other suspicious activity is identified, further analysis should be performed to identify the methodologies used and whether any other accounts show the same characteristics. They too may be part of a wider fraud scheme. For example, for a confirmed credit card fraud, were the repayments made from an account which has also remitted payments for other credit cards in different names?

The Federal Reserve provides valuable resources including a useful checklist on post-loss analysis: https://fedpaymentsimprovement.org/wp-content/uploads/identifying-existing-synthetics-post-loss.pdf
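The credit card question raised above (one account remitting payments for cards in different names) can be sketched as a simple grouping exercise. The following is a minimal illustration, assuming repayment records are available as rows with a paying account, a card identifier, and a cardholder name. The `Repayment` type, its field names, and the `min_names` threshold are hypothetical and would need to be adapted to an institution's own data.

```python
from collections import defaultdict
from typing import NamedTuple


class Repayment(NamedTuple):
    """One card repayment record (hypothetical schema for illustration)."""
    payer_account: str
    card_id: str
    cardholder_name: str


def shared_payer_accounts(repayments, min_names=2):
    """Map each paying account to the distinct cardholder names it has paid for,
    keeping only accounts linked to at least `min_names` different names."""
    names_by_payer = defaultdict(set)
    for repayment in repayments:
        # Normalize the name so trivial case or spacing differences do not
        # count as separate people.
        name = repayment.cardholder_name.strip().lower()
        names_by_payer[repayment.payer_account].add(name)
    return {
        account: sorted(names)
        for account, names in names_by_payer.items()
        if len(names) >= min_names
    }
```

An account returned by `shared_payer_accounts` is not necessarily fraudulent, since family members commonly pay each other's bills. It is a lead for an analyst to review alongside the other indicators discussed in this article.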

While institutions should use “red flags” to identify risk indicators for fraud, they should also ensure these processes are applied responsibly. For example, well-established indicators of a falsified SSN include an SSN that does not correspond with the age of the person, the absence of a credit score, or a short address history. While these are red flags for a falsified SSN, they are also consistent with a valid SSN for a person recently arrived in the United States.

Fraud solutions should also include “negative indicators” and/or manual validation to identify whether the fraud red flags are a “false positive.” For example, in the scenario above, the person may have opened their account with a passport and visa showing their arrival date in the United States. Imprecise application of red flags without adequate human oversight adversely impacts financial inclusion, and over-reliance on automated systems may even result in litigation, regulatory penalties, and financial failure of the business.[15]
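One way to picture how red flags and negative indicators can work together is the following minimal Python sketch. It uses the three SSN red flags and the passport-and-visa scenario from the text. The `Applicant` fields, the 24-month address threshold, and the outcome labels are assumptions made for illustration, and every flagged case is routed to a person instead of being declined automatically.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    """Hypothetical onboarding record used only for this illustration."""
    ssn_consistent_with_age: bool
    has_credit_score: bool
    address_history_months: int
    # Negative indicator: documents such as a passport and visa that show
    # a recent arrival date in the United States.
    has_recent_arrival_documents: bool = False


def ssn_red_flags(applicant: Applicant, min_address_months: int = 24) -> list:
    """Collect the falsified-SSN red flags that apply to this applicant."""
    flags = []
    if not applicant.ssn_consistent_with_age:
        flags.append("ssn_age_mismatch")
    if not applicant.has_credit_score:
        flags.append("no_credit_score")
    if applicant.address_history_months < min_address_months:
        flags.append("short_address_history")
    return flags


def triage(applicant: Applicant) -> str:
    """Route the applicant; red flags always lead to human review."""
    if not ssn_red_flags(applicant):
        return "no_red_flags"
    if applicant.has_recent_arrival_documents:
        # The flags are explained by a plausible legitimate reason.
        return "manual_validation_possible_false_positive"
    return "manual_review_suspected_falsified_ssn"
```

For a recently arrived customer, `triage(Applicant(False, False, 3, has_recent_arrival_documents=True))` returns `"manual_validation_possible_false_positive"`, whereas the same red flags without supporting documents return `"manual_review_suspected_falsified_ssn"`.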

Financial institutions should provide training to their staff specifically on AI fraud. To enhance relevance and retention of knowledge, training should be customized for the fraud risks faced by each group of staff, for example:

All staff should be trained on the actions required if they identify suspected or actual fraud.

In addition to training staff, financial institutions should consider customer awareness campaigns which include information on how AI can be used to perpetrate fraud. This enhances the customer relationship by providing useful resources. It also reduces customer vulnerability to fraud — and the potential for financial institutions to incur losses or to be held accountable by regulators for having inadequate controls to protect their customers. (See the sidebar “Bank responsibilities for defrauded customers: Best practices.”)

For example, awareness campaigns for corporate customers could focus on CEO frauds and how their business might be targeted. For business accounts, any change to payee information should trigger an out-of-band confirmation — the same type of control used in business email compromise (BEC) prevention. Individual customers could be educated on elder fraud, romance scams, and family/medical emergency scams, among others. Customers could also be encouraged to learn the signs that their family members or friends might be targeted so that they can more effectively intervene and support.

Financial institutions should share information with each other where permitted by regulation, as well as reporting it to appropriate U.S. government agencies. This increases awareness of fraud methodologies as well as specific fraud schemes, enabling them to be more quickly identified and contained, as well as supporting law enforcement investigations. For example:

Bank Responsibilities for Defrauded Customers: Best Practices

Regulators increasingly expect financial institutions to have effective measures in place to protect their customers from fraud and to take effective action to support customers if fraud occurs. This position recognizes that banks have extensive resources and data available, enabling them to identify patterns across their customer base, whereas each customer has access only to their own account information and is rarely a fraud expert.

As AI increases the sophistication of frauds, and therefore the likelihood that customers may become victims, financial institutions would be well-advised to ensure their anti-fraud programs include effective customer protection measures. Based on action taken by regulators, their expectations of financial institutions include:

Fraud generates billions in proceeds every year, and the use of AI significantly increases the speed, scale, and likelihood of success. In particular, synthetic and deepfake identities and entities amplify fraud threats to financial institutions of all sizes, providing not just opportunities for fraud but also accounts that can be used to launder the illicit proceeds of other crimes. Financial institutions must take action in response. Fraud is not new, nor is financial institutions’ successful track record of adapting and responding to new forms of crime. Institutions that take action, remain informed, and include AI-specific measures in their programs can significantly reduce risk for their institution, staff, and customers.

[1] ‘Fraudster Tales: History’s Greatest Financial Criminals and Their Catastrophic Crimes’ by Vijay Narayan Govind (2024)

[2] https://www.signicat.com/press-releases/42-5-of-fraud-attempts-are-now-ai-driven-financial-institutions-rushing-to-strengthen-defences; https://www.deloitte.com/us/en/insights/industry/financial-services/deepfake-banking-fraud-risk-on-the-rise.html; https://fedpaymentsimprovement.org/wp-content/uploads/sif-synthetic-money-mules.pdf

[3] https://www.fincen.gov/sites/default/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf

[5] https://www.fatf-gafi.org/en/publications/Fatfrecommendations/Guidance-Beneficial-Ownership-Legal-Persons.html; https://www.fincen.gov/news/news-releases/fincen-issues-final-rule-regarding-access-beneficial-ownership-information

[7] https://fedpaymentsimprovement.org/wp-content/uploads/sif-toolkit-genai.pdf

[8] https://www.fincen.gov/sites/default/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf

[9] https://www.fincen.gov/sites/default/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf; https://fedpaymentsimprovement.org/wp-content/uploads/use-case-credit-union-organization-link-analysis.pdf

[10] https://www.fincen.gov/sites/default/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf; https://media.defense.gov/2023/Sep/12/2003298925/-1/-1/0/CSI-DEEPFAKE-THREATS.PDF; https://www.dhs.gov/sites/default/files/publications/increasing_threats_of_deepfake_identities_0.pdf

[11] https://media.defense.gov/2023/Sep/12/2003298925/-1/-1/0/CSI-DEEPFAKE-THREATS.PDF

[12] https://www.ic3.gov/PSA/2024/PSA240516 and https://www.ic3.gov/PSA/2025/PSA250123

[14] https://fedpaymentsimprovement.org/wp-content/uploads/identifying-existing-synthetics-within-portfolio.pdf; https://fedpaymentsimprovement.org/resources/synthetic-identity-fraud-mitigation-toolkit/how-synthetic-identities-are-used/; https://www.threatmark.com/wp-content/uploads/2025/05/ThreatMark-AI-vs-Fraud-The-Future-of-Fraud-Defense-Whitepaper.pdf

[15] https://www.fca.org.uk/news/press-releases/fca-censures-amigo-failing-conduct-adequate-affordability-checks; https://www.amigoscheme.co.uk/
